Boost LLM Efficiency: Google's TurboQuant Cuts Memory Usage by 6x

Google's new TurboQuant algorithm significantly reduces the memory footprint of large language models, accelerating AI performance without sacrificing accuracy.

Google Research has unveiled TurboQuant, a compression algorithm that it says can reduce the memory usage of large language models (LLMs) by up to 6 times while also boosting speed and maintaining accuracy.

LLMs, the AI models behind tasks like text understanding and generation, are notorious for their memory requirements. A major culprit is the key-value cache, which works like a cheat sheet: it stores important information so the model does not have to recompute it. TurboQuant targets this cache, compressing it without compromising performance.

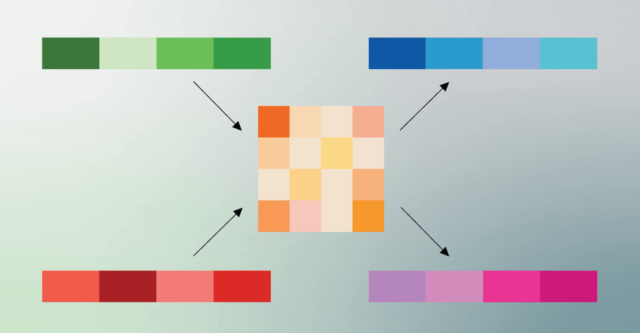

LLMs rely on vectors to map the semantic meaning of tokenized text. High-dimensional vectors, which can have hundreds or thousands of dimensions, can describe complex information such as the pixels in an image or a large dataset. However, they also occupy a significant amount of memory, inflating the size of the key-value cache and limiting the models' efficiency.
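To get a feel for how quickly these vectors add up, here is a back-of-the-envelope estimate of key-value cache size. It is only a sketch: the function name `kv_cache_bytes` and the model shape (32 layers, 8 key-value heads, 128 dimensions per head, a 32,768-token context, 16-bit values) are illustrative assumptions, not figures from Google or from any particular model.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bits_per_value=16, batch_size=1):
    """Rough cache size: one key and one value vector per token, layer, and head."""
    # The factor of 2 accounts for storing both a key and a value vector.
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return values * bits_per_value // 8

# Hypothetical model shape, chosen only for illustration.
baseline = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=32_768)
compressed = baseline / 6  # what an "up to 6x" reduction would leave

print(f"16-bit cache:         {baseline / 2**30:.2f} GiB")
print(f"after a 6x reduction: {compressed / 2**30:.2f} GiB")
```

Under these assumptions the cache takes 4 GiB for a single sequence, and a 6x reduction would bring that to roughly 0.67 GiB. The cache grows linearly with context length and batch size, which is why it becomes a bottleneck for long prompts.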

To make models smaller and more efficient, developers often employ quantization techniques to reduce the precision of these vectors. TurboQuant takes this concept a step further, introducing a novel compression algorithm that can shrink the key-value cache by up to 6 times without sacrificing the accuracy of the language model.
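For readers unfamiliar with quantization, the sketch below shows the general idea using plain min-max uniform quantization to 4 bits. This is a generic textbook technique used here for illustration, not TurboQuant's method, and the helper names `quantize` and `dequantize` are made up for this example.

```python
import random

def quantize(vector, bits):
    """Map each float to an integer code in [0, 2**bits - 1] on a min-max grid."""
    lo, hi = min(vector), max(vector)
    levels = (1 << bits) - 1
    # Guard against a constant vector, where hi == lo.
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((x - lo) / scale) for x in vector]
    return codes, lo, scale

def dequantize(codes, lo, scale):
    """Recover approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]

random.seed(0)
vector = [random.gauss(0.0, 1.0) for _ in range(128)]  # stand-in for one cached vector

codes, lo, scale = quantize(vector, bits=4)
restored = dequantize(codes, lo, scale)
max_error = max(abs(a - b) for a, b in zip(vector, restored))

print(f"grid step: {scale:.4f}, max abs error: {max_error:.4f}")
```

Each value now needs 4 bits instead of 16, at the cost of a rounding error of at most half a grid step. Fewer bits mean a coarser grid and a larger error, which is the trade-off that more sophisticated schemes try to improve on.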

This has significant implications for the future of AI. As LLMs continue to grow in size and complexity, the ability to dramatically reduce their memory footprint could improve performance and make the models more accessible and scalable across a wide range of applications.

TurboQuant works by compressing the key-value cache so that the information the model depends on is preserved while the memory needed to store it drops sharply. This makes the models more efficient to run and paves the way for more powerful and accessible AI-driven solutions.

As the demand for high-performance, memory-efficient AI continues to grow, Google's TurboQuant stands out as a contribution that could reshape how generative AI models are deployed.

Source: Ars Technica