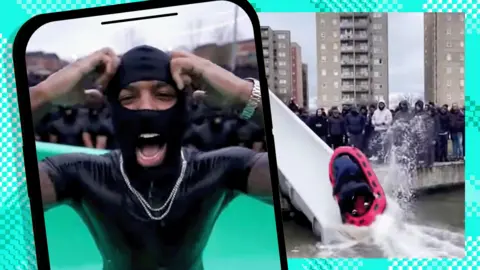

Fake AI Videos of UK Urban Decay Flood Social Media

Deepfake videos depicting deteriorating UK public facilities are spreading across social platforms, sparking racist reactions and misinformation concerns.

A disturbing trend has emerged across social media platforms as artificial intelligence-generated videos depicting fictional scenes of urban decay in the United Kingdom continue to proliferate at an alarming rate. These sophisticated deepfake productions showcase imaginary scenarios of deteriorating public infrastructure, abandoned taxpayer-funded facilities, and dystopian urban landscapes that bear little resemblance to reality yet are convincing enough to fool casual viewers.

The deepfake videos primarily focus on public amenities such as waterparks, swimming pools, community centers, and recreational facilities that appear to be in states of severe disrepair. These AI-generated clips show crumbling concrete structures, stagnant water filled with debris, broken tiles, and overgrown vegetation reclaiming what were supposedly once-thriving public spaces. The level of detail in these fabricated scenes is remarkably sophisticated, incorporating realistic lighting, weather effects, and environmental textures that make them appear authentic to unsuspecting viewers.

What makes these AI-generated videos particularly concerning is their rapid spread across multiple social media platforms, including TikTok, Twitter, Facebook, and Instagram. The algorithmic nature of these platforms tends to amplify content that generates strong emotional responses, and these fake videos of urban decline certainly achieve that objective. Users share them with captions expressing outrage about government spending, failed public policies, and societal breakdown, often without questioning their authenticity or investigating their origins.

The creation and distribution of these fake AI videos appear to be part of a coordinated effort to manipulate public opinion about urban development, government spending, and social policies in the UK. Many of the videos are accompanied by misleading captions that claim to show the results of specific political decisions or the current state of particular neighborhoods, despite being entirely fabricated. This misinformation campaign exploits people's existing concerns about public spending and urban development to spread false narratives.

Perhaps most troubling is the way these deepfake videos have become vehicles for racist commentary and discriminatory rhetoric. The comment sections beneath these viral clips are frequently filled with inflammatory responses that blame specific ethnic communities, immigrants, or minority groups for the fictional decay depicted in the videos. This racist backlash demonstrates how sophisticated AI-generated content can be weaponized to promote divisive ideologies and reinforce harmful stereotypes about different communities.

Social media users who encounter these videos often react with genuine shock and dismay, believing they are witnessing real evidence of societal breakdown or failed government policies. The emotional impact of seeing what appears to be taxpayer money wasted on crumbling infrastructure triggers strong reactions that users feel compelled to share with their networks. This viral mechanism ensures that the AI-generated misinformation reaches increasingly large audiences, amplifying its potential for harm.

The technical sophistication of these deepfakes represents a significant advancement in AI video generation technology. Unlike earlier examples of synthetic media that contained obvious visual artifacts or inconsistencies, these new productions demonstrate improved quality in terms of texture rendering, lighting consistency, and temporal coherence. The creators appear to be using advanced generative adversarial networks (GANs) or similar machine learning models trained on extensive datasets of real urban environments and decay scenarios.

Experts in synthetic media detection warn that identifying these AI-generated videos is becoming increasingly difficult for average users. Traditional telltale signs of deepfake content, such as unnatural facial movements or inconsistent lighting, are less relevant when the videos focus on inanimate objects and environmental scenes. The lack of human subjects in many of these videos eliminates some of the most reliable detection methods that researchers and fact-checkers typically employ.

The phenomenon reflects broader challenges facing society as AI technology becomes more accessible and sophisticated. The tools required to create convincing deepfake videos are no longer limited to well-funded organizations or highly technical specialists. Consumer-grade software and cloud-based AI services have democratized the creation of synthetic media, making it possible for individuals with modest technical skills and limited resources to produce convincing fake content.

Government officials and media literacy advocates are expressing growing concern about the potential impact of this AI-generated disinformation on public discourse and democratic processes. When citizens base their political opinions and voting decisions on false information that appears authentic, the integrity of democratic institutions can be undermined. The viral nature of these fake videos means that corrections and fact-checks often fail to reach the same audiences that viewed the original misleading content.

Social media platforms are struggling to develop effective responses to this new form of synthetic media manipulation. Traditional content moderation approaches that rely on user reporting and human reviewers are insufficient for addressing the scale and sophistication of AI-generated misinformation. Automated detection systems are still in their infancy and often fail to identify convincing deepfakes, particularly those that don't feature human subjects.

The creators of these deepfake videos often operate anonymously and use techniques to obscure their identities and motivations. Some appear to be motivated by political agendas, while others may be seeking to generate engagement and revenue through viral content. The international nature of social media platforms makes it difficult for authorities to investigate and prosecute those responsible for creating and distributing misleading AI-generated content.

Educational institutions and media literacy organizations are working to develop new curricula and resources to help people identify synthetic media and think critically about the content they encounter online. However, the rapid pace of technological advancement means that detection techniques and educational materials often lag behind the latest deepfake capabilities. Teaching people to question the authenticity of seemingly realistic videos represents a fundamental shift in how we approach media consumption.

The psychological impact of exposure to these fake videos should not be underestimated. Even when people later learn that content was artificially generated, the initial emotional response and mental images can persist and influence their perceptions of reality. A related phenomenon, known as the "illusory truth effect," means that repeated exposure to false information can make it seem more credible over time, regardless of its actual accuracy.

Researchers studying the spread of AI-generated misinformation note that these videos exploit existing social anxieties about urban decline, government spending, and community safety. By presenting fictional scenarios that align with people's existing fears and preconceptions, the creators increase the likelihood that viewers will accept the content as authentic and share it with others who hold similar concerns.

The implications of this trend extend beyond the UK, as similar tactics could be employed to manipulate public opinion in other countries. The techniques used to create these convincing fake videos of urban decay could easily be adapted to target different nations, communities, or political systems. This represents a new frontier in information warfare and propaganda that requires coordinated international responses.

Source: BBC News