BBC Reporter Hacked Through AI Coding Platform Flaws

Security vulnerabilities in popular AI coding tools expose users to hacking risks, as demonstrated when a BBC journalist fell victim to platform flaws.

The rapidly expanding world of AI coding platforms has revealed significant security vulnerabilities, as illustrated when a BBC reporter was hacked through one of these tools. The incident highlights growing concerns about the security of platforms designed to open app development to non-technical users. As these no-code AI tools gain traction across industries, cybersecurity experts warn of exploitation vectors that could affect millions of users worldwide.

The affected journalist was using one of the popular visual coding platforms that have surged in popularity over the past year, allowing individuals without programming backgrounds to create functional applications through artificial intelligence assistance. These platforms typically use drag-and-drop interfaces combined with AI-powered code generation to transform user ideas into working software. However, the incident revealed that the underlying security protocols of such platforms may not be robust enough to protect users from malicious actors who understand how to exploit the AI-driven development process.

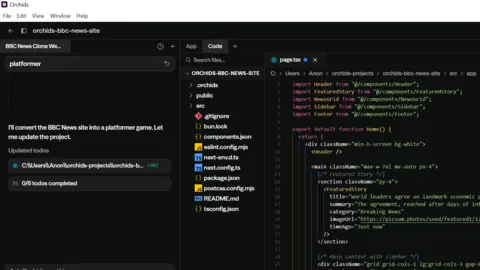

Security researchers who analyzed the hack discovered that the vulnerability stemmed from inadequate input validation within the AI code generation system. The platform's artificial intelligence was designed to interpret natural language requests and convert them into functional code, but it lacked sufficient safeguards to prevent the injection of malicious commands disguised as legitimate development requests. This allowed attackers to essentially trick the AI into generating code that could access sensitive user data and system resources beyond the intended scope of the application being developed.
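One safeguard of the kind described as missing here is a static check on AI-generated code before it runs. The following is a minimal sketch in Python, not the method any platform in this story is known to use. The allowlist `ALLOWED_MODULES` and the function `disallowed_imports` are hypothetical names chosen for illustration, and the example assumes the generated code is Python.

```python
import ast

# Hypothetical allowlist: modules a simple data-visualization app might need.
ALLOWED_MODULES = {"math", "json", "csv", "statistics"}


def disallowed_imports(source: str) -> set[str]:
    """Return top-level modules imported by `source` that are not allowlisted."""
    tree = ast.parse(source)  # parses only; the generated code is never executed
    found = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                found.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # node.module is None for relative imports such as "from . import x"
            if node.module:
                found.add(node.module.split(".")[0])
    return found - ALLOWED_MODULES


# Example of generated code that reaches beyond the app's intended scope.
generated = """
import csv
import socket
from urllib.request import urlopen
"""

print(sorted(disallowed_imports(generated)))  # ['socket', 'urllib']
```

A check like this catches only plain `import` statements. It would miss dynamic imports such as `__import__("socket")`, so it would at best be one layer among several rather than a complete defense.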

The cybersecurity implications of this incident extend far beyond a single compromised account. Industry analysts estimate that over 15 million users worldwide are now actively using various AI-powered coding platforms, with adoption rates increasing by approximately 300% year-over-year. Major platforms in this space include established players like Microsoft's Power Platform, Google's AppSheet, and numerous startups that have collectively raised billions in venture capital funding. The accessibility and ease of use that make these platforms attractive to non-technical users also create a broader attack surface for cybercriminals.

Cybersecurity firm CyberArk's latest research indicates that AI-powered development tools represent a new category of security risk that traditional cybersecurity frameworks haven't adequately addressed. The firm's chief technology officer explained that these platforms create unique challenges because they blur the lines between user input and executable code, making it difficult to implement conventional security measures without hampering the user experience that makes these tools valuable in the first place.

The BBC reporter's experience serves as a cautionary tale for the broader adoption of these technologies. According to sources familiar with the incident, the attack began when the journalist attempted to create a simple data visualization app using the platform's AI assistant. The attacker had apparently discovered a method to embed malicious instructions within seemingly innocent development prompts, causing the AI to generate code that established unauthorized network connections and transmitted sensitive information to external servers.

Following the incident, several major AI coding platform providers have announced immediate security audits and enhanced protection measures. The platforms are implementing improved input sanitization, closer monitoring of AI-generated code, and stricter permission models that limit the system access granted to applications created through their tools. However, security experts warn that these measures may address only known attack vectors, while the rapid evolution of AI technology could introduce new vulnerabilities faster than they can be identified and patched.
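As an illustration of what a stricter permission model could look like in practice, the sketch below restricts the network destinations a generated app may contact. It is an assumption-laden example, not a description of any provider's actual controls: `ALLOWED_HOSTS`, `is_egress_allowed`, and the example hostnames are all hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical per-app permission: the only hosts this generated app may contact.
ALLOWED_HOSTS = {"api.example.com"}


def is_egress_allowed(url: str, allowed_hosts: set[str] = ALLOWED_HOSTS) -> bool:
    """Allow only HTTPS requests whose host exactly matches an allowlisted host."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    return parts.scheme == "https" and host in allowed_hosts


print(is_egress_allowed("https://api.example.com/v1/data"))      # True
print(is_egress_allowed("https://attacker.example.net/upload"))  # False
print(is_egress_allowed("https://api.example.com.evil.test/"))   # False
```

Matching the full hostname exactly, rather than testing for a prefix or substring, is what rejects the lookalike domain in the third call.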

The economic impact of these security concerns is already becoming apparent in the market. Venture capital firms that have heavily invested in the no-code and AI coding space are reportedly conducting emergency security assessments of their portfolio companies. Several enterprise clients have temporarily suspended their use of certain platforms pending comprehensive security reviews, potentially affecting the revenue projections of companies in this rapidly growing sector.

Dr. Sarah Chen, a cybersecurity researcher at Stanford University who specializes in AI security vulnerabilities, emphasized that this incident represents just the beginning of a new era of cyber threats. Her research team has identified over 20 different potential attack vectors specific to AI coding platforms, ranging from prompt injection attacks to model manipulation techniques that could compromise the integrity of generated code at scale.

The regulatory landscape surrounding AI development tools is also evolving in response to these security challenges. The European Union's proposed AI Act includes specific provisions for AI-powered software development tools, requiring enhanced transparency and security measures for platforms that enable non-experts to create applications. Similar regulatory discussions are underway in the United States, with Congressional committees examining whether existing cybersecurity frameworks are adequate for governing AI-powered development platforms.

Industry leaders are calling for standardized security protocols designed specifically for AI coding platforms. Their proposals include mandatory security audits, standardized vulnerability disclosure processes, and industry-wide sharing of threat intelligence on AI-specific attack methods. However, implementing such standards across the diverse ecosystem of AI coding tools presents significant technical and logistical challenges.

The incident has also sparked broader discussions about the trade-offs between accessibility and security in software development. While democratized coding tools have enabled countless individuals and small businesses to create valuable applications without extensive technical expertise, the security risks may require a fundamental rethinking of how these platforms balance ease of use with robust protection measures.

As the investigation into the BBC reporter's hack continues, cybersecurity professionals are using the incident as a case study to develop better protection strategies for AI coding platforms. The lessons learned from this breach are expected to influence next-generation security frameworks designed for the unique challenges that artificial intelligence poses in software development environments. The aim is to ensure that the democratization of coding doesn't come at the expense of user security and privacy.

Source: BBC News