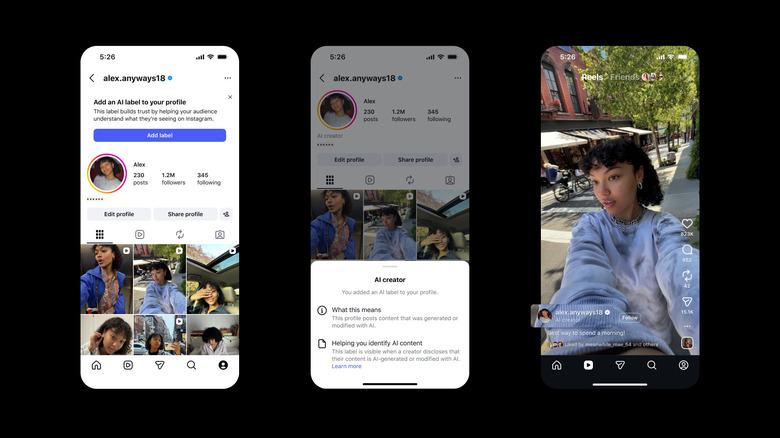

Instagram Tests Optional AI Creator Labels

Instagram is testing voluntary AI creator labels to help users identify accounts that post generated content.

Instagram is testing optional AI creator labels that accounts can adopt, part of an effort to make its creator ecosystem more transparent. The platform, owned by Meta, is encouraging creators who frequently produce and share generative AI content to use the feature, though participation is not mandatory. The approach reflects the platform's attempt to balance innovation with user awareness of how much AI-generated material appears on the network.

The rollout of these optional labels comes at a time when artificial intelligence-generated content is becoming increasingly sophisticated and widespread across social media platforms. Instagram's decision to make the labels voluntary rather than compulsory suggests the company is taking a cautious approach to implementation, allowing creators the autonomy to decide whether they want to publicly identify their use of generative AI technology. This flexibility may help ease concerns from creators who worry about potential negative impacts on their audience engagement or algorithmic visibility.

The feature aims to address growing concerns from both users and regulators about the proliferation of AI-generated content on social platforms without clear disclosure. By providing creators with transparency tools, Instagram is attempting to foster greater trust within its community while demonstrating corporate responsibility in the age of rapidly advancing AI capabilities. The initiative reflects broader industry conversations about how platforms should handle and disclose artificially generated content.

The testing phase represents an important step in content authenticity efforts across the social media landscape. Rather than implementing strict enforcement mechanisms that could alienate creators or stifle innovation, Meta is opting for an educational and incentive-based approach. The company is encouraging participation by framing the labels as a way for creators to be upfront with their audiences about their creative processes and the tools they use to produce content.

According to industry analysts, this voluntary approach could yield valuable data about creator adoption rates and user reception of AI-generated content when properly labeled. Meta can use this information to refine its policies and potentially develop more sophisticated detection mechanisms for AI-generated media in the future. The testing phase also allows the company to gather feedback from various stakeholder groups, including creators, users, and advocacy organizations focused on digital literacy and content authenticity.

The implications of this move extend beyond Instagram's platform, potentially setting precedents for how other social media companies approach AI content disclosure. As platforms grapple with the challenge of maintaining user trust while embracing new technologies, Instagram's balanced approach demonstrates one possible path forward. The voluntary labeling system could become a template for industry-wide standards if it proves effective at increasing awareness without significantly disrupting creator activity.

Creators using the AI labels will be better positioned to communicate their creative methodology directly to their audiences. This transparency can benefit creators who use AI tools creatively, as it lets them demonstrate their technical sophistication and explain how these tools fit into their artistic or commercial process. Some creators argue that being upfront about AI usage can build credibility and trust with audiences who appreciate innovation and efficiency in content creation.

The testing period will likely reveal important insights about user behavior and preferences regarding AI-generated content. Audiences have varying levels of comfort with AI involvement in content creation, and transparency about its use could enhance engagement among certain demographic groups. Understanding these nuances will be crucial for Instagram as it develops its long-term strategy for managing synthetic media on its platform.

Meta's approach also reflects the current regulatory environment surrounding artificial intelligence and digital content. Various governments and regulatory bodies are increasingly scrutinizing how platforms handle and disclose AI-generated material, particularly in sensitive areas like news, political content, and advertising. By proactively implementing voluntary labeling features, Instagram may be positioning itself favorably in ongoing regulatory discussions about platform responsibility and transparency standards.

The implementation of these labels also raises important questions about the distinction between human-created and AI-assisted content. Many creators use AI tools as part of a hybrid workflow that combines human creativity with artificial intelligence capabilities. The voluntary labeling system will need to accommodate this nuanced reality, where creation is neither purely human nor purely artificial, but rather a collaborative process between human creators and algorithmic tools.

Looking ahead, the success of Instagram's optional AI creator labels will depend on several factors, including creator adoption rates, user engagement with labeled content, and the effectiveness of the feature in building trust within the community. Meta will need to monitor how the labels affect content visibility, engagement metrics, and creator behavior patterns. This data will inform whether the voluntary approach should be maintained, refined, or potentially replaced with mandatory disclosure requirements in the future.

The broader context of this initiative underscores the evolving relationship between creators, platforms, and audiences in an era of advancing AI technology. As generative AI becomes increasingly accessible and capable, social media platforms face mounting pressure to maintain transparency and user trust. Instagram's testing of optional labels represents a measured response to these pressures, demonstrating the company's commitment to addressing community concerns while avoiding heavy-handed restrictions that could limit creator expression and innovation.

Instagram's test of optional AI creator labels marks a significant moment in the platform's evolution as it navigates the intersection of artificial intelligence and authentic human expression. By choosing to encourage rather than mandate the use of these labels, the company is taking a pragmatic approach that respects creator autonomy while promoting greater transparency and awareness. As the feature continues through its testing phase, it will likely provide valuable insights that shape how the broader social media industry handles AI-generated content disclosure in the years to come.

Source: Engadget